Vehicles that navigate everyday traffic on their own while the driver works on a laptop or relaxes in the back seat: such images are still visions of the future. Although no fully autonomous vehicle has yet been approved for use on the roads, car manufacturers are increasingly installing driver assistance systems as standard. Examples include emergency brake assist and traffic sign recognition - systems which must also function reliably in difficult weather conditions.

Assistance systems work on the basis of camera images, which are provided by sensors installed around the vehicle. They thus deliver high-quality results and make driving safer - at least in theory. In everyday use, however, it is not that simple: if the camera lens is contaminated by dirt or dust, or if the weather is bad, with rain, fog or snow, the software does not receive a clear image. As a result, important information about the surroundings may be missing, and the assistance system may provide incorrect or incomplete information.

But it is precisely under poor ambient conditions that the driver needs the reliable support of the assistance systems. The solution to this challenge: our AI-based image editing software modules. These can, for example, detect dirt on the camera lens of the sensor and reconstruct the image areas obscured by it or remove fog from images. Thanks to the software modules, assistance systems receive high-quality images without any loss of information, even under difficult environmental conditions - drivers can therefore rely on the flawless functioning and support of the installed systems.

But how do the systems work and how did EDAG develop the image editing software? A closer look provides exciting insights into the world of AI-supported software.

SmoFod provides support for risky fog drives

One of these image editing modules is the Smog and Fog Dehazer (SmoFod). It removes fog from images using artificial intelligence (AI). Fog droplets scatter light, which causes colors to fade and contrasts to weaken. The consequence for drivers: their visibility deteriorates. Depending on the density of the fog, differences in the color and contrast of distant objects become so small that humans can hardly discern them.

How does SmoFod help here? Let's first take a look at the camera images: when a vehicle drives through fog, the onboard cameras record digital images. These consist of three channels (red, green and blue). Each channel consists of individual pixels that represent the intensity of that color at the specific image location. The image can thus be broken down and analyzed at a very fine level of detail. This is where the image processing software comes in: SmoFod analyzes minute differences in pixel values and modifies them to produce a clear image. In effect, the fog is computed out of the image.
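To make the channel and pixel structure concrete, here is a minimal sketch using NumPy. It assumes the common height x width x channel array layout with 8-bit values; the tiny 2x2 image and all variable names are purely illustrative and not taken from SmoFod.

```python
import numpy as np

# A tiny synthetic 2x2 RGB image; shape is (height, width, channels).
image = np.array(
    [[[255, 0, 0], [0, 255, 0]],
     [[0, 0, 255], [200, 200, 200]]],
    dtype=np.uint8,
)

# Split the image into its three color channels.
red, green, blue = image[..., 0], image[..., 1], image[..., 2]

# Intensity of the red channel at row 0, column 0.
top_left_red = int(red[0, 0])

# A simple per-pixel measure of color strength: the spread between the
# strongest and the weakest channel. Fog pushes this spread towards zero.
channel_spread = image.max(axis=-1).astype(int) - image.min(axis=-1).astype(int)
```

The gray pixel in the bottom right has a spread of zero, which is roughly what heavily fogged regions look like: all three channels carry almost the same value.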

SmoFod: Cleverly built on existing solutions

In 2017, we at EDAG started developing the SmoFod image editing software. An end-to-end system for removing haze from single images caught our attention: DehazeNet by Bolun Cai et al.1 It formed the basis of our development from then on. DehazeNet follows the assumption that the light transmission factor of the haze is identical for every pixel within a very small image section. The approach was therefore to train a network that determines this factor for a small section of the image. To generate a training dataset, patches of 16x16 pixels were extracted from various images and synthetically fogged using a randomly generated light transmission value. With this dataset, the development team trained the network: it receives a fogged 16x16 image patch as input and estimates the light transmission factor. This estimate is then compared with the correct, known factor, and the network's weights are adjusted accordingly - this is how the network learns.
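The data generation step can be sketched roughly as follows. This is an illustration under stated assumptions, not EDAG's pipeline: it assumes the standard haze model (foggy = clear * t + airlight * (1 - t)), white fog (airlight of 1.0) and a transmission range of 0.05 to 1.0. The function names and the random stand-in image are hypothetical.

```python
import numpy as np

PATCH = 16  # patch edge length in pixels, as described in the text


def extract_patches(image, rng, count):
    """Cut `count` random PATCH x PATCH patches from an H x W x 3 float image in [0, 1]."""
    h, w, _ = image.shape
    rows = rng.integers(0, h - PATCH + 1, size=count)
    cols = rng.integers(0, w - PATCH + 1, size=count)
    return np.stack([image[r:r + PATCH, c:c + PATCH] for r, c in zip(rows, cols)])


def fog_patches(patches, rng, airlight=1.0):
    """Fog every patch with one random transmission value t, constant within the patch."""
    t = rng.uniform(0.05, 1.0, size=len(patches))  # assumed range, not from the article
    t_b = t[:, None, None, None]                   # broadcast over height, width, channels
    fogged = patches * t_b + airlight * (1.0 - t_b)
    return fogged, t


rng = np.random.default_rng(0)
clear_image = rng.random((64, 64, 3))        # stand-in for a real fog-free photo
patches = extract_patches(clear_image, rng, count=8)
fogged, t = fog_patches(patches, rng)        # network input and regression target
```

Each pair `(fogged[i], t[i])` is one training example: the network sees the fogged patch and is penalized for the difference between its estimate and the known `t[i]`.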

Reconstruction of a clear image

For later use in the vehicle, however, we do not need the light transmission factor but the defogged image. As early as 1924, Koschmieder developed a formula for calculating horizontal visibility.2 By rearranging this model, we were able to calculate the defogged image.

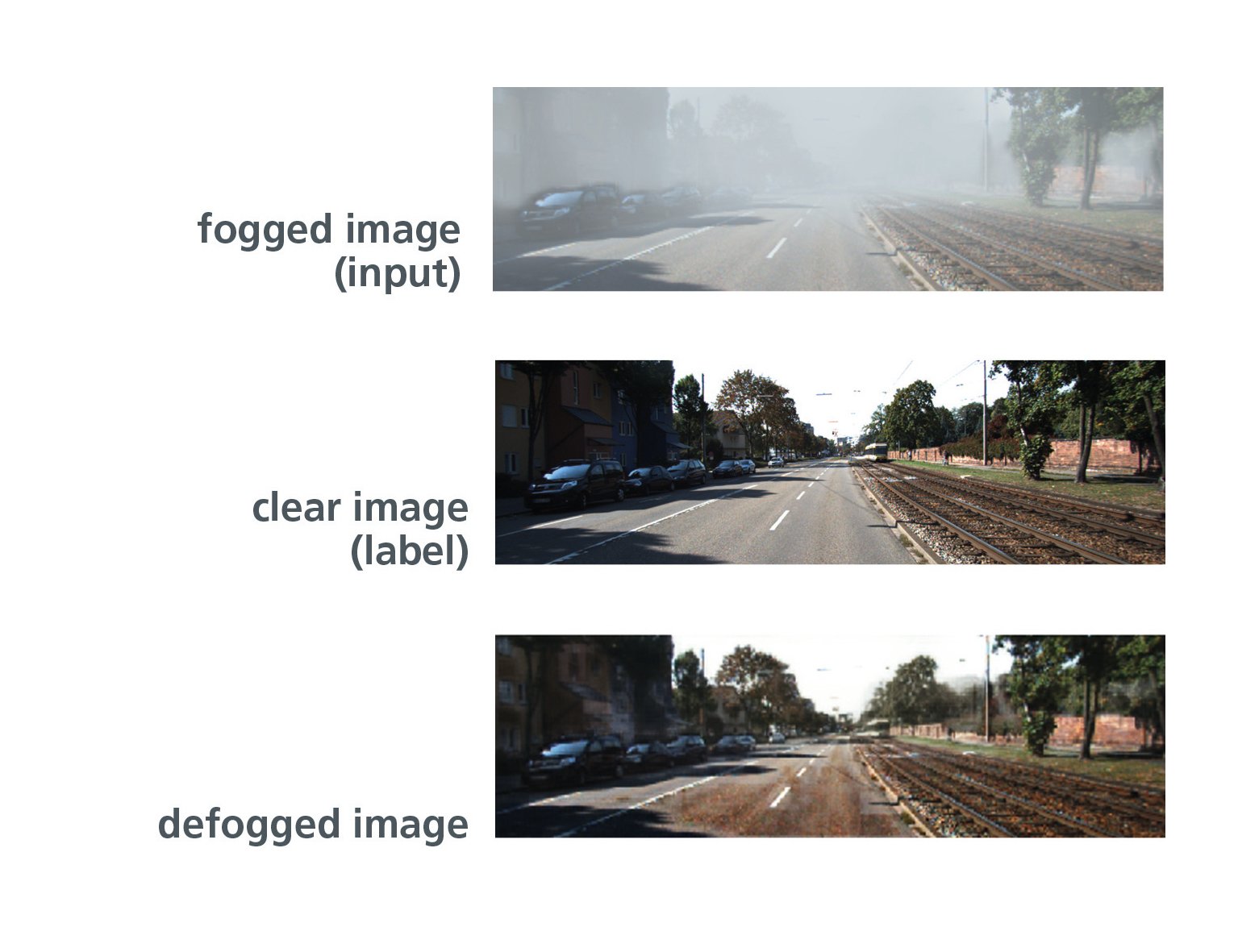
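Written out in the notation common in the dehazing literature, the haze model and its rearrangement for the clear image read roughly as follows. The symbols are assumptions and may differ from those used in the figure.

```latex
% I(x): observed foggy pixel       J(x): fog-free pixel
% t(x): light transmission factor in (0, 1]
% A:    brightness of the fog itself (airlight)
\begin{align}
I(x) &= J(x)\,t(x) + A\,\bigl(1 - t(x)\bigr) \\
J(x) &= \frac{I(x) - A}{t(x)} + A
\end{align}
```

With t(x) = 1 the image is unchanged (J = I). As t(x) approaches 0, the pixel is dominated by the airlight A, and the division by a small t(x) amplifies any error in its estimate.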

If a 16x16 kernel is now slid over the input image, the trained network determines the light transmission value for each image section. Using these values and Koschmieder's model, the original color values of the pixels can be calculated and a clear image reconstructed. The model was thus able to defog fogged images. However, this revealed another problem that had to be solved: colors were distorted and artifacts appeared in the image.
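The reconstruction step can be sketched as below. Since the trained network is not available here, `estimate_transmission` is a hypothetical stand-in that uses a simple dark-channel-style heuristic instead. The white airlight, the lower bound `t_min` and the non-overlapping tiles are likewise simplifying assumptions.

```python
import numpy as np

PATCH = 16  # tile edge length in pixels


def estimate_transmission(tile):
    """Hypothetical stand-in for the trained network: one transmission value per tile.

    Uses a dark-channel-style heuristic, NOT the model described in the article.
    """
    return float(np.clip(1.0 - 0.95 * tile.min(), 0.1, 1.0))


def dehaze(hazy, airlight=1.0, t_min=0.1):
    """Estimate t for each PATCH x PATCH tile, then invert the haze model per pixel."""
    h, w, _ = hazy.shape
    t_map = np.ones((h, w))
    for r in range(0, h, PATCH):          # non-overlapping tiles for simplicity
        for c in range(0, w, PATCH):
            tile = hazy[r:r + PATCH, c:c + PATCH]
            t_map[r:r + PATCH, c:c + PATCH] = estimate_transmission(tile)
    t_map = np.maximum(t_map, t_min)      # avoid dividing by values near zero
    clear = (hazy - airlight) / t_map[..., None] + airlight
    return np.clip(clear, 0.0, 1.0), t_map


hazy_image = np.full((32, 32, 3), 0.5)    # uniformly gray, "fogged" test image
clear_image, t_map = dehaze(hazy_image)
```

Because every tile gets a single transmission value, neighboring tiles can end up with visibly different corrections. This is one plausible source of the block artifacts and color shifts mentioned above.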

Finding creative solutions: Cleverly removing obstacles

At the same time as EDAG was developing SmoFod, Hinton et al. published a paper on a novel network architecture called the "Capsule Neural Network". This architecture appeared promising and was expected to help overcome the last obstacles EDAG was still facing in image dehazing.

Let's first take a brief look at how an "ordinary" convolutional neural network (CNN) works: a CNN extracts features from the input by sliding a filter over the image. The entries of the filter are learned, so feature extraction can be tuned to be as helpful as possible for the final result. Each neuron in the next layer is computed from only a small, local part of the neurons in the previous layer rather than from all of them.
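The filter operation can be illustrated with a few lines of NumPy. This is a deliberately simple sketch with a hand-set filter. In a real CNN the filter entries are learned, and frameworks use far faster implementations.

```python
import numpy as np


def conv2d_valid(image, kernel):
    """Slide `kernel` over a 2-D `image` (no padding, stride 1) and return the feature map.

    As in most deep learning frameworks, this is technically a cross-correlation.
    """
    kh, kw = kernel.shape
    out_h = image.shape[0] - kh + 1
    out_w = image.shape[1] - kw + 1
    out = np.empty((out_h, out_w))
    for r in range(out_h):
        for c in range(out_w):
            # Each output value depends only on a small local window of the input.
            out[r, c] = np.sum(image[r:r + kh, c:c + kw] * kernel)
    return out


# A vertical edge: dark left half, bright right half.
image = np.zeros((5, 6))
image[:, 3:] = 1.0

# Hand-set horizontal-gradient filter; in a CNN these nine entries would be learned.
kernel = np.array([[-1.0, 0.0, 1.0]] * 3)

feature_map = conv2d_valid(image, kernel)
```

The feature map is non-zero only where the window straddles the edge, which is exactly the "feature" this particular filter responds to.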

To illustrate with an example: given an image of a person, features such as eyes, nose and mouth can be extracted. If the face is tilted, an ordinary CNN has to extract the same features again in a tilted position - this is where the Capsule Neural Network (CapsNet) comes in. It stores additional information about each feature, in this case, for example, the inclination of the head. This reduces the number of distinct features that must be learned and increases performance. Another novelty that CapsNet brings to the world of Deep Learning is so-called routing-by-agreement: a group of neurons (a capsule) passes its output mainly to those capsules in the next layer whose output agrees with its own prediction.
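Routing-by-agreement can be sketched in NumPy as follows. The sketch follows the iterative routing procedure as published for CapsNet. The toy predictions and all names are illustrative, and how EDAG's autoencoder applies routing internally is not shown in this article.

```python
import numpy as np


def squash(s, eps=1e-9):
    """Shrink each vector's length into [0, 1) while keeping its direction."""
    sq_norm = np.sum(s ** 2, axis=-1, keepdims=True)
    return (sq_norm / (1.0 + sq_norm)) * s / np.sqrt(sq_norm + eps)


def route(u_hat, iterations=3):
    """Routing-by-agreement.

    u_hat has shape (n_lower, n_upper, dim): what each lower capsule predicts
    for each upper capsule. Returns the upper capsule outputs (n_upper, dim)
    and the coupling coefficients (n_lower, n_upper) of the last iteration.
    """
    n_lower, n_upper, _ = u_hat.shape
    logits = np.zeros((n_lower, n_upper))
    for _ in range(iterations):
        # Softmax over the upper capsules: each lower capsule distributes its output.
        e = np.exp(logits - logits.max(axis=1, keepdims=True))
        coupling = e / e.sum(axis=1, keepdims=True)
        s = np.sum(coupling[..., None] * u_hat, axis=0)   # weighted vote per upper capsule
        v = squash(s)
        # Agreement: predictions that point the same way as the output gain weight.
        logits = logits + np.sum(u_hat * v[None], axis=-1)
    return v, coupling


# Four lower capsules, two upper capsules, 2-D pose vectors.
u_hat = np.zeros((4, 2, 2))
u_hat[:, 0] = [1.0, 0.0]                    # all agree about upper capsule 0
u_hat[0::2, 1] = [0.0, 1.0]                 # they contradict each other
u_hat[1::2, 1] = [0.0, -1.0]                # about upper capsule 1

outputs, coupling = route(u_hat)
```

After a few iterations, the coupling coefficients towards upper capsule 0 dominate, because that is where the lower capsules' predictions agree.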

CapsNet and SmoFod: A winning combination

Now the task was to integrate this CapsNet functionality into our SmoFod - with the goal of improving image reconstruction. Reconstruction tasks are often performed by autoencoders - and so we developed a novel architecture based on an autoencoder with the features of CapsNet. The autoencoder was thus able to store additional information about the extracted features and decide which neurons should receive which information.

It works: EDAG's image processing software not only defogs images but also ensures that the colors of the reconstructed image are nearly identical to those of the original, fog-free image. Colors are not oversaturated and the resulting image is free of artifacts. SmoFod is intended for use in driver assistance systems, so the performance of downstream systems on the resulting images is crucial for evaluating the network. Simply put, we had to answer the question: can SmoFod hold up in practical testing together with driver assistance systems?

Can SmoFod hold its own in practical use?

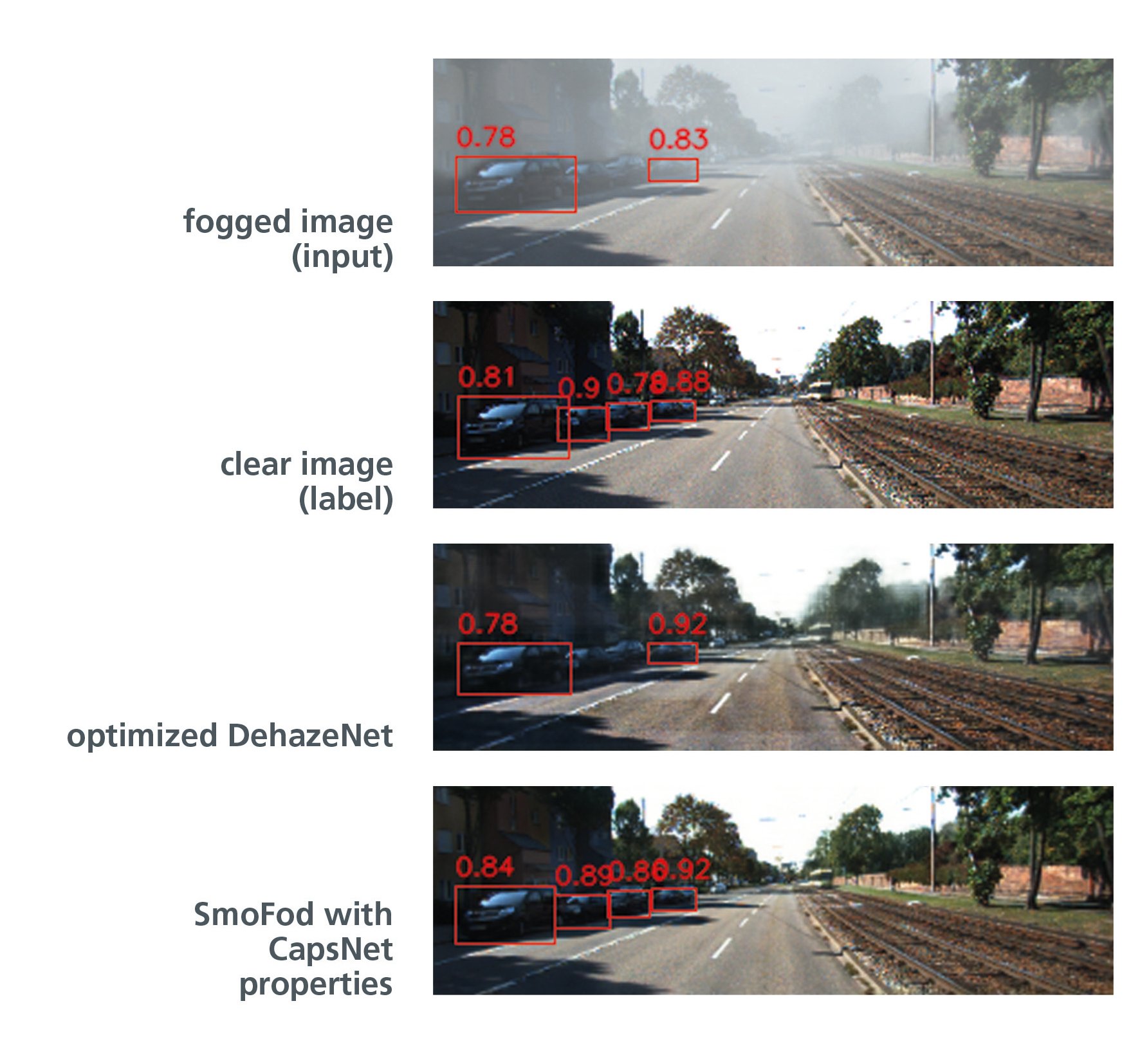

We tested our results for this use case with the object detector YOLO (You Only Look Once). YOLO detects all image regions of interest in a single pass and classifies each with a confidence score.

The images show how YOLO assigns a red bounding box and the corresponding detection score to each detected vehicle. The fogged image yields the worst detection scores. Defogging with the optimized DehazeNet hardly improves them, although the reconstructed image appears visually much clearer than the fogged one. In contrast, the detection scores in the image defogged using the novel autoencoder are excellent. Some are even higher than in the original image.

In concrete terms, this means that the enhanced version of our SmoFod image editing software works very well. The driver assistance system receives images without any loss of information - and drivers receive a system that supports them safely even in bad weather conditions.

Our team in Lindau/Ulm is working intensively on tasks in the areas of Deep Learning and Computer Vision. If you are interested in these technologies or in further application areas of AI, Nathalie Klingler, Software Developer AI, will be happy to help you. For more information, download her whitepaper "Optimisation of the SmoFod using a Capsule Neural Network".

1 Bolun Cai et al. DehazeNet: An End-to-End System for Single Image Haze Removal. CoRR, abs/1601.07661, 2016.

2 Stephan Lenor. Model-Based Estimation of Meteorological Visibility in the Context of Automotive Camera Systems. Dissertation, Heidelberg University, 2016.